Enter the Enterface

We're exiting the interface. Designing for conversational, agentic software.

I’ve come to the uncomfortable realization that designing software is now so much more than wireframing and mocking up in Figma. We’re moving so rapidly from GUIs (graphical user interfaces) to chat interfaces, with open prompts and conversational design, that there are almost no established patterns to model on. The barrier to entry for building software is dropping so fast, and the complexity is increasing so quickly, that for the past 13 months (essentially since Sonnet 3.5 was upgraded to 3.7) I’ve been putting my son to bed and then designing and coding almost every night, often from 10pm to 1am. I don’t want to. This is unsustainable. I love it. I’m confused, and just about everyone else I talk with in the field is too.

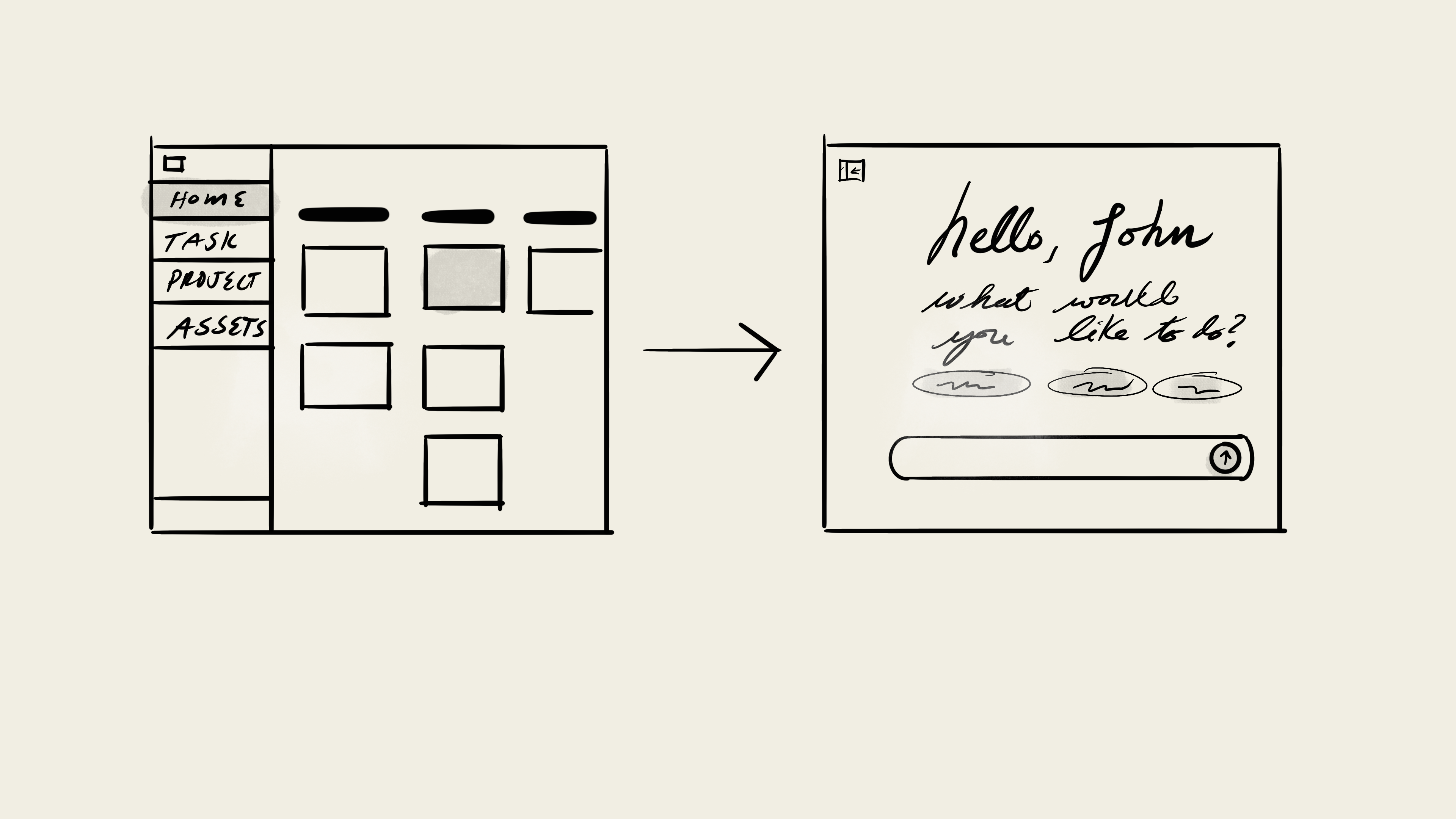

The joy of GUIs is that the affordances are obvious. You click a button to create a task. You type into a field and hit save, and it saves. The interface tells the user exactly what it can do, even if it takes a little while to navigate to what you need. The tools are all there, plainly visible behind tabs, dropdowns, buttons, and pages. I’m a super visual person. This level of abstraction makes sense to me! I can draw it, show it to someone, and we click through the workflows together. I can get sign-off. Quality is obvious, because it’s in the visual craft.

We’re exiting the interface.

Welcome to the enterface.

Design is dead! Software is over! Figma is kaput. Whatever the LinkedIn garbage is saying, it’s all false. It’s all true. Let’s focus on what’s actually happening: conversational interaction, driven by LLMs and agents, is moving front and center in nearly every interface.

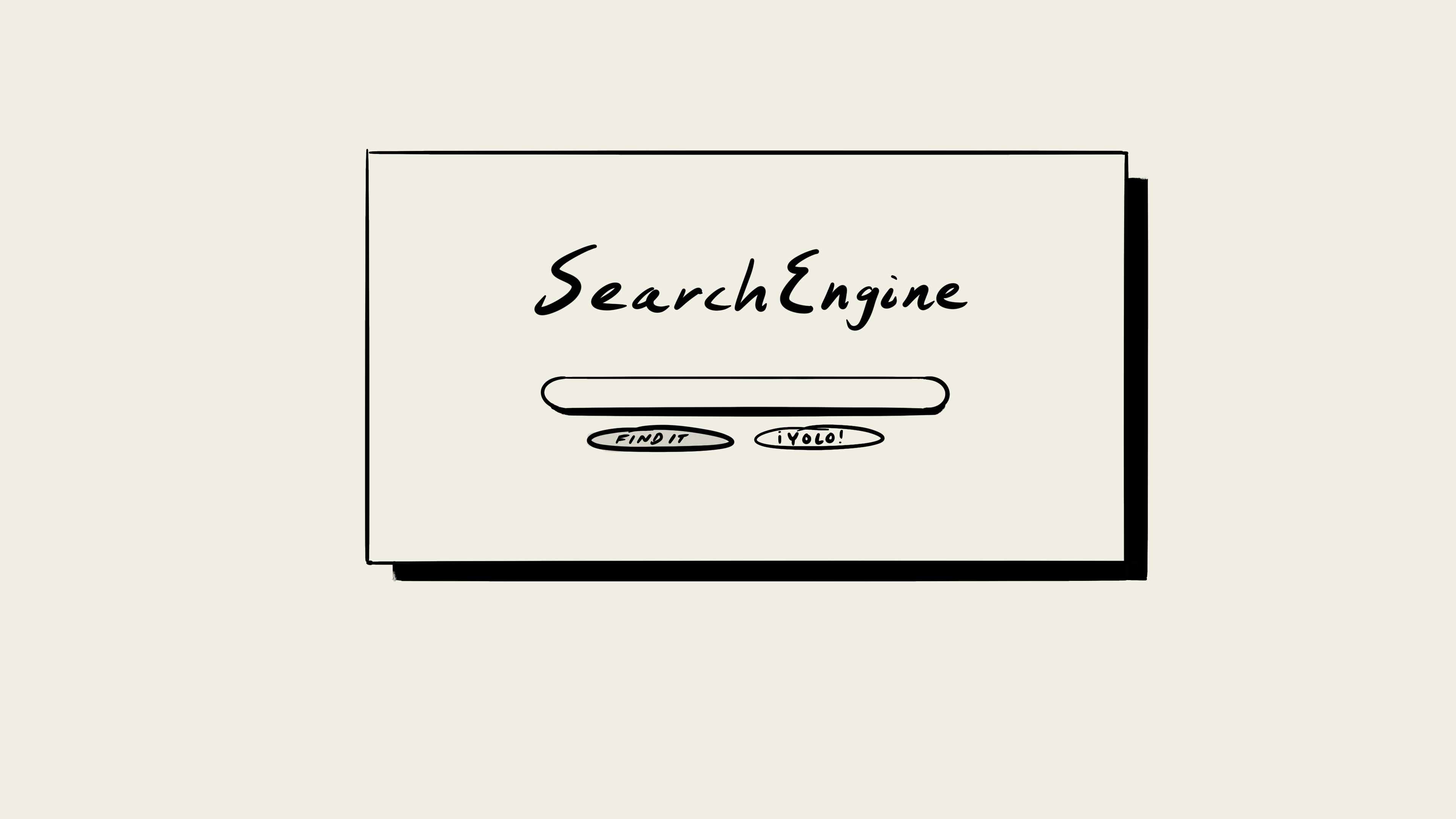

An open chat field. A “What do you want?” prompt. The user types (or speaks) and hits enter. The enterface.

- Core: An open prompt, with some recommendations. Chat back and forth, like good ol’ AIM. Feedback on what the software is doing.

- Add-ons: UI on the fly. Interaction and feedback on what’s actually happening. Follow-up (plan mode), and output feedback on which result you like better.

The challenge with the enterface is that the user needs to know what it can do, and what it does well and not so well: namely, how a given model actually performs, where the edges of its abilities are, and how to spot when it gets stuck or is hallucinating. This is learned through experience or by reading technical docs. Most people don’t want that; they just want to get the task done. Almost every add-on is a band-aid on a workflow.

Check out the convergence of this design pattern.

Task / project management: Asana, Linear, and Jira are all moving from a task dashboard to chat as the home / landing page.

AI-native workflow: Sana and Harvey are already there. For ChatGPT and Anthropic, it’s the main pattern.

Google: the OG “ask us anything,” with an “I’m Feeling Lucky” button, aka --dangerously-skip-permissions.

I’m seeing a ton of commentary about calling this design approach “Lazy PM Work” and “A Trend” given the uptick in this design pattern. I think these comments are wrong and grossly oversimplifying what’s actually going on here.

- Lazy? No, but it is a rearview-mirror pattern if this is your destination.

- Trend? No. I think it’s a stepping stone to what’s about to come.

How it looks over time.

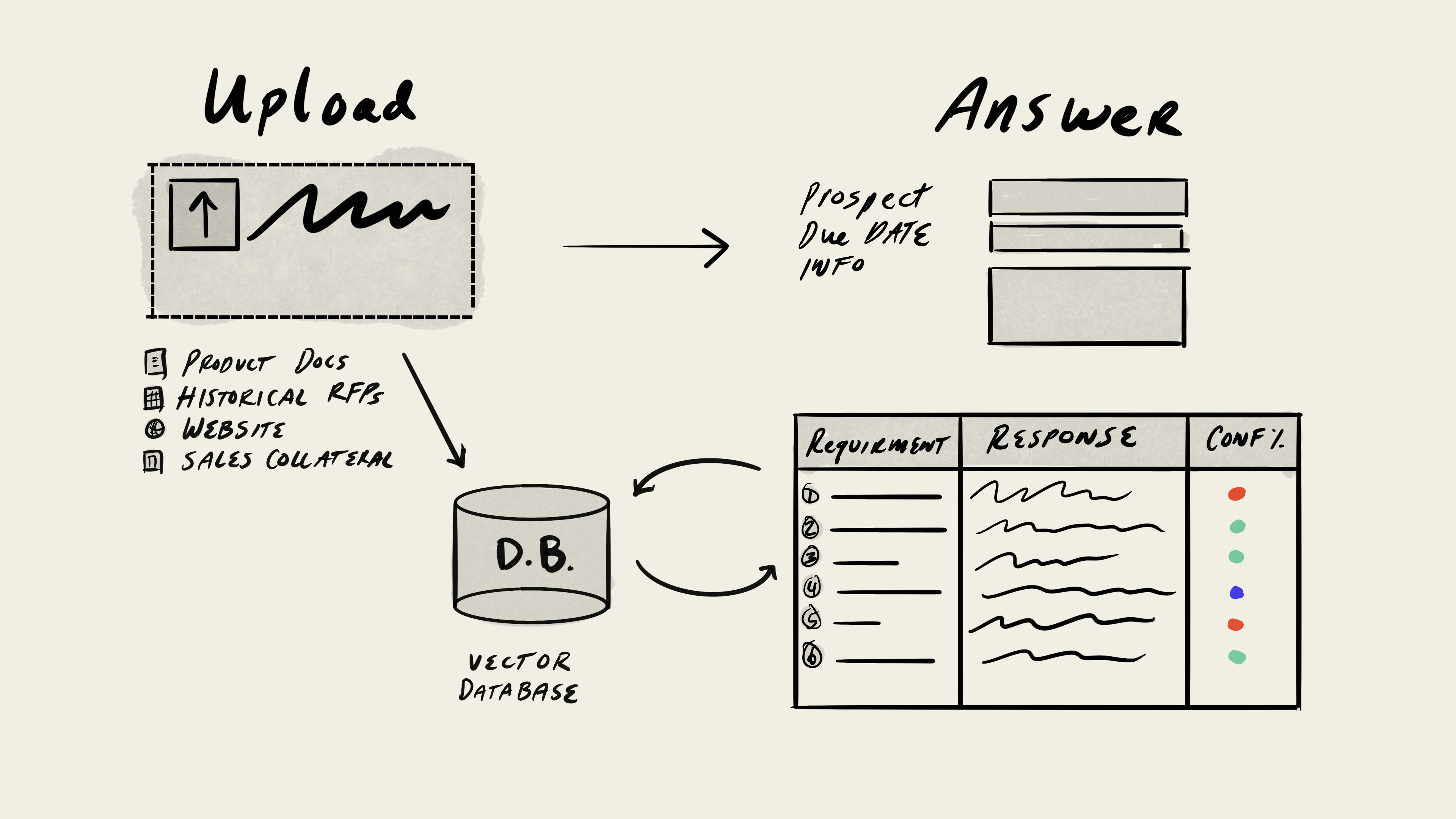

Let me show you how I think this evolution is going to play out over “design time” across an extremely narrow and specific use case I’m super familiar with: the RFP Automation solution.

From

This is the traditional B2B SaaS answer (with an AI sprinkle) to the RFP problem.

- Onboard: Upload historical RFPs and the product library. It vectorizes them in the backend.

- Answer workflow:

  - Get an RFP from a prospect

  - Upload

  - Answer

  - Tag, save, rework

  - Download

  - Send back

A UI, with AI in a box, for one very specific job.

To

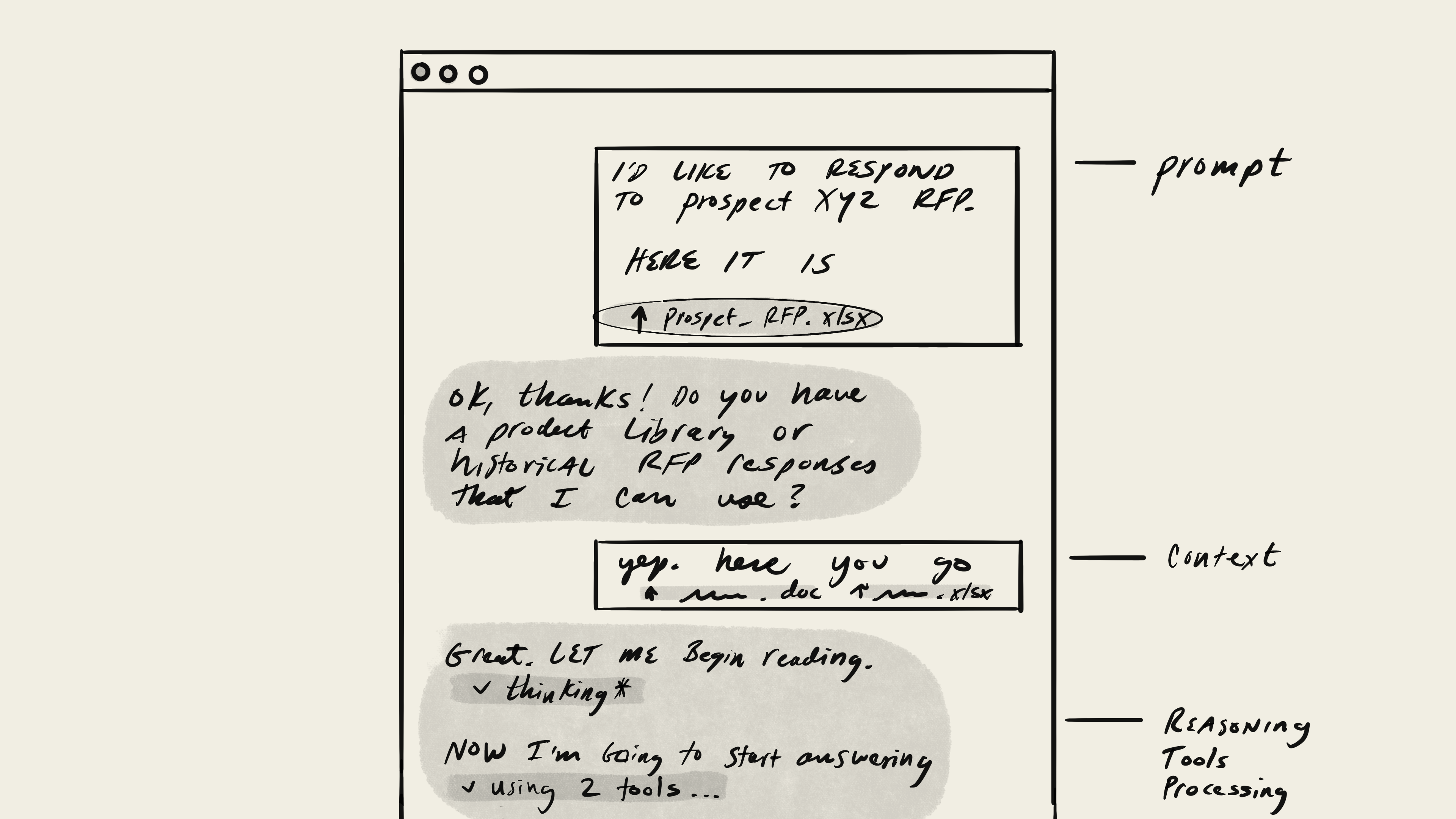

Pull up your frontier-model front end of choice. It’s a chatbot: Claude, ChatGPT, or Gemini.

- Prompt what to do (extra credit for having this saved as a Gem, GPT, Project folder / instruction)

- Upload the context (each time, or it’s in a library)

- It’ll tell you what it’s doing and build all the scripts, logic, etc. on the fly. There’s a back and forth. It almost always gets it wrong on the first attempt (no one-shotting for this use case).

- Maybe it’ll show you an artifact (micro software or a document) so you can check it and control quality.

- Ask it to export into the format you need, usually CSV, documents, or decks. It’s getting better at this, but it’s not great yet.

Really heavy human-in-the-loop. Non-deterministic workflow and outcome. This is probably why we’re seeing no, low, or even negative ROI on this type of workflow. So up pop Harvey for legal contracts and documents, and other niche vertical SaaS.

Edge

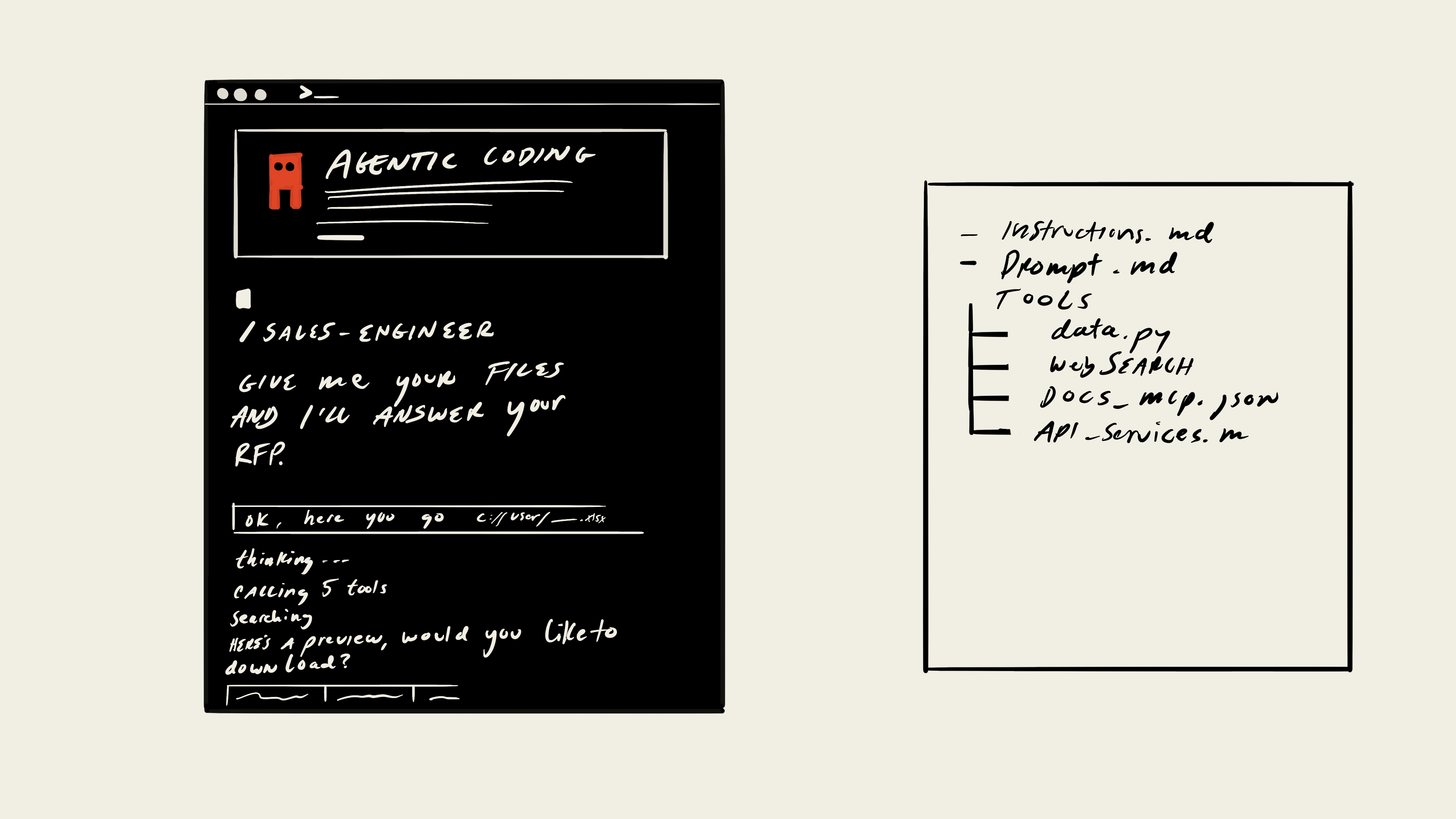

Claude Code Skills & Tools, like the skills.sh package.

Pull up the terminal, start Claude Code, and enter a /sales-engineering workflow.

It calls the RFP workflow (the LLM can reason its way to that) and then reasons about which tool calls it needs to make, given its system prompt and tools. Here the tool is a Python script that handles the data manipulation for the input and output.
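The post doesn’t show that script, so here is a minimal sketch of the kind of deterministic helper a skill might call, assuming the RFP arrives as CSV with a `question` column and the answer library is a list of past question/answer pairs. The function names, the CSV columns, the threshold, and the crude word-overlap (Jaccard) matching are all hypothetical stand-ins; a real setup would more likely query the vectorized library from the onboarding step.

```python
import csv
import io
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens, used for a crude similarity score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of tokens two sets have in common, from 0.0 (none) to 1.0 (identical)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def answer_rfp(questions_csv: str, library: list[dict[str, str]], threshold: float = 0.3) -> str:
    """Hypothetical helper: draft RFP answers by matching each question to a past one.

    Expects CSV text with a 'question' column. Returns CSV text with
    question, answer, score, and status columns; anything under the
    threshold is left blank and marked 'open' for a human to answer.
    """
    reader = csv.DictReader(io.StringIO(questions_csv))
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=["question", "answer", "score", "status"])
    writer.writeheader()
    for row in reader:
        question = row["question"]
        q_tokens = tokens(question)
        # Pick the library entry whose past question overlaps most with this one.
        best_answer, best_score = "", 0.0
        for entry in library:
            score = jaccard(q_tokens, tokens(entry["question"]))
            if score > best_score:
                best_answer, best_score = entry["answer"], score
        matched = best_score >= threshold
        writer.writerow({
            "question": question,
            "answer": best_answer if matched else "",
            "score": f"{best_score:.2f}",
            "status": "drafted" if matched else "open",
        })
    return out.getvalue()


if __name__ == "__main__":
    # Hypothetical answer library and incoming RFP questions.
    library = [
        {"question": "Does the product support SSO?", "answer": "Yes, via SAML 2.0."},
        {"question": "Where is customer data stored?", "answer": "In US-based data centers."},
    ]
    questions = "question\nDoes your product support SSO?\nWhat is your uptime SLA?\n"
    print(answer_rfp(questions, library))
```

In this toy run the SSO question gets a drafted answer and the SLA question comes back marked open. That split is the point of keeping the script deterministic: the model decides when to call it and how to explain the open items, while the matching itself is repeatable.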

Future

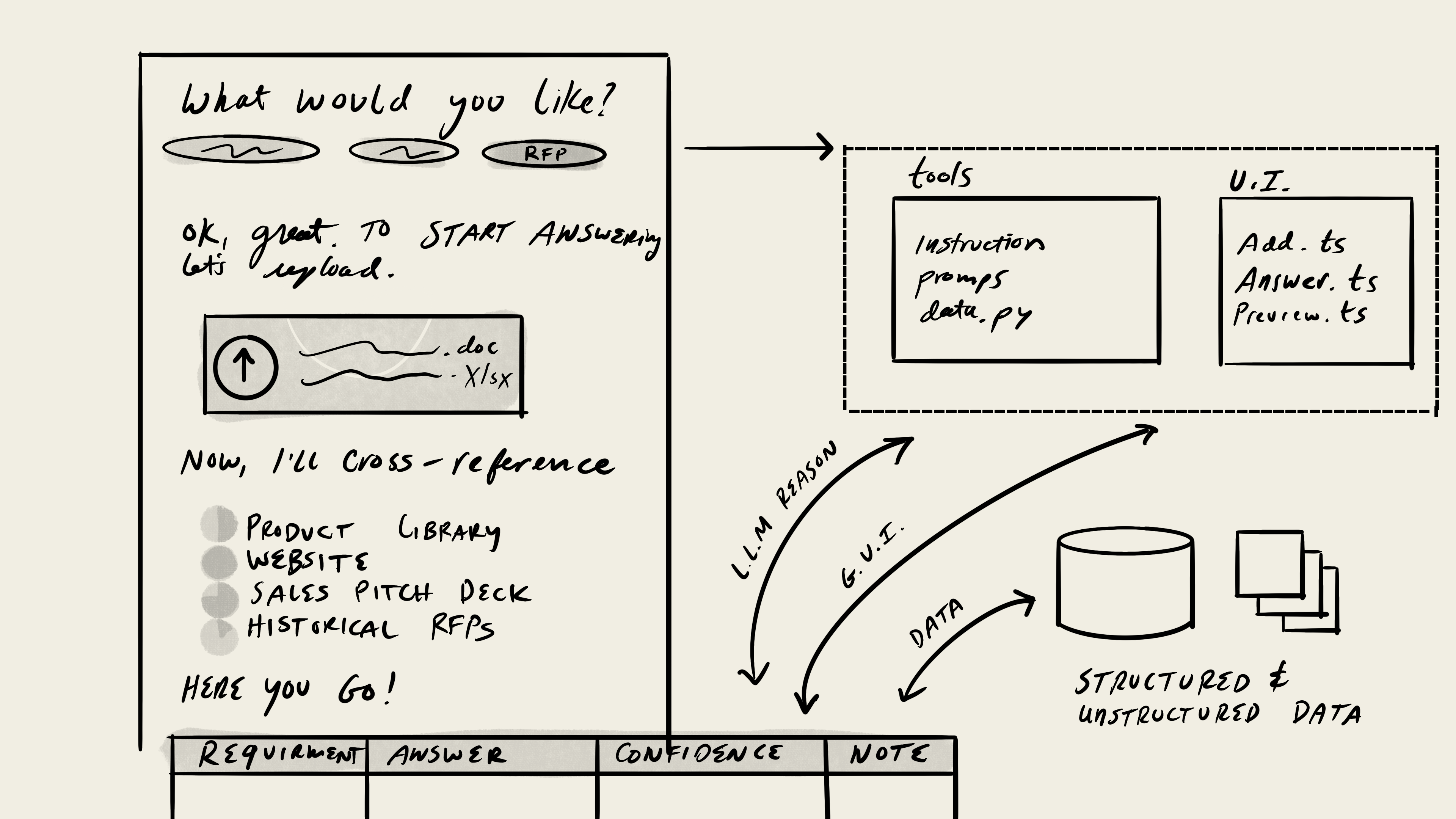

Assuming the models continue to improve (that has not only held true but accelerated over this past year), we’ll keep getting smarter about how to interact with them (Cowork, inline UI).

The agent (and the collection of agents it bundles with) will be the core way to interact with software and the computer. The runtime/session is the key workflow, like running any software program.

Here are my two divergent, but perhaps bundled, views of what’s going to happen.

1. From the chat, human-in-the-loop

There will be data / business logic (like the Python script mentioned in the last section) mixed with components (the coding agents really like TypeScript, and specifically libraries like shadcn/ui, which is React (.tsx) + Tailwind CSS). While these will be defined in the backend, the agent will be able to rearrange the parts to fit the need, creating custom workflows that evolve. This is part of the “malleable software” definition, but I think it’s going to extend a bit into runtime.
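One way to picture that split, as a minimal sketch under my own assumptions: the backend registers fixed building blocks (a bit of business logic, plus a component described as a plain spec that a front end could render as a table), and the agent’s job at runtime is only to pick and order them into a plan. The registry, the step names, and the `{"component": "Table"}` spec are all hypothetical; in a real system the plan would come from the model’s tool calls rather than a hard-coded list.

```python
from typing import Callable

# Hypothetical: each step takes the session state and returns an updated copy.
Step = Callable[[dict], dict]
REGISTRY: dict[str, Step] = {}


def register(name: str) -> Callable[[Step], Step]:
    """Decorator that makes a backend-defined step available to the agent by name."""
    def wrap(fn: Step) -> Step:
        REGISTRY[name] = fn
        return fn
    return wrap


@register("extract_questions")
def extract_questions(state: dict) -> dict:
    # Business logic: one question per non-empty line of the raw RFP text.
    lines = (line.strip() for line in state["raw"].splitlines())
    return {**state, "questions": [line for line in lines if line]}


@register("filter_security")
def filter_security(state: dict) -> dict:
    # Business logic: keep only security-related questions.
    keywords = ("security", "sso")
    keep = [q for q in state["questions"] if any(k in q.lower() for k in keywords)]
    return {**state, "questions": keep}


@register("render_table")
def render_table(state: dict) -> dict:
    # Component: a plain spec a front end could render as a table.
    return {**state, "ui": {"component": "Table", "rows": state["questions"]}}


def run_plan(plan: list[str], state: dict) -> dict:
    """Run the steps the agent chose, in order; unknown steps are rejected."""
    for name in plan:
        if name not in REGISTRY:
            raise KeyError(f"unknown step: {name}")
        state = REGISTRY[name](state)
    return state


if __name__ == "__main__":
    raw = "Do you support SSO?\n\nDescribe your security program.\nWhat is your pricing?\n"
    # Two different plans over the same parts: the agent reshapes the workflow.
    print(run_plan(["extract_questions", "render_table"], {"raw": raw})["ui"])
    print(run_plan(["extract_questions", "filter_security", "render_table"], {"raw": raw})["ui"])
```

The parts stay fixed and reviewable; only their composition changes per session. Rejecting unknown step names is one simple guardrail for letting a model assemble workflows at runtime.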

2. Complete offload

This is the AGI fever dream for this use case.

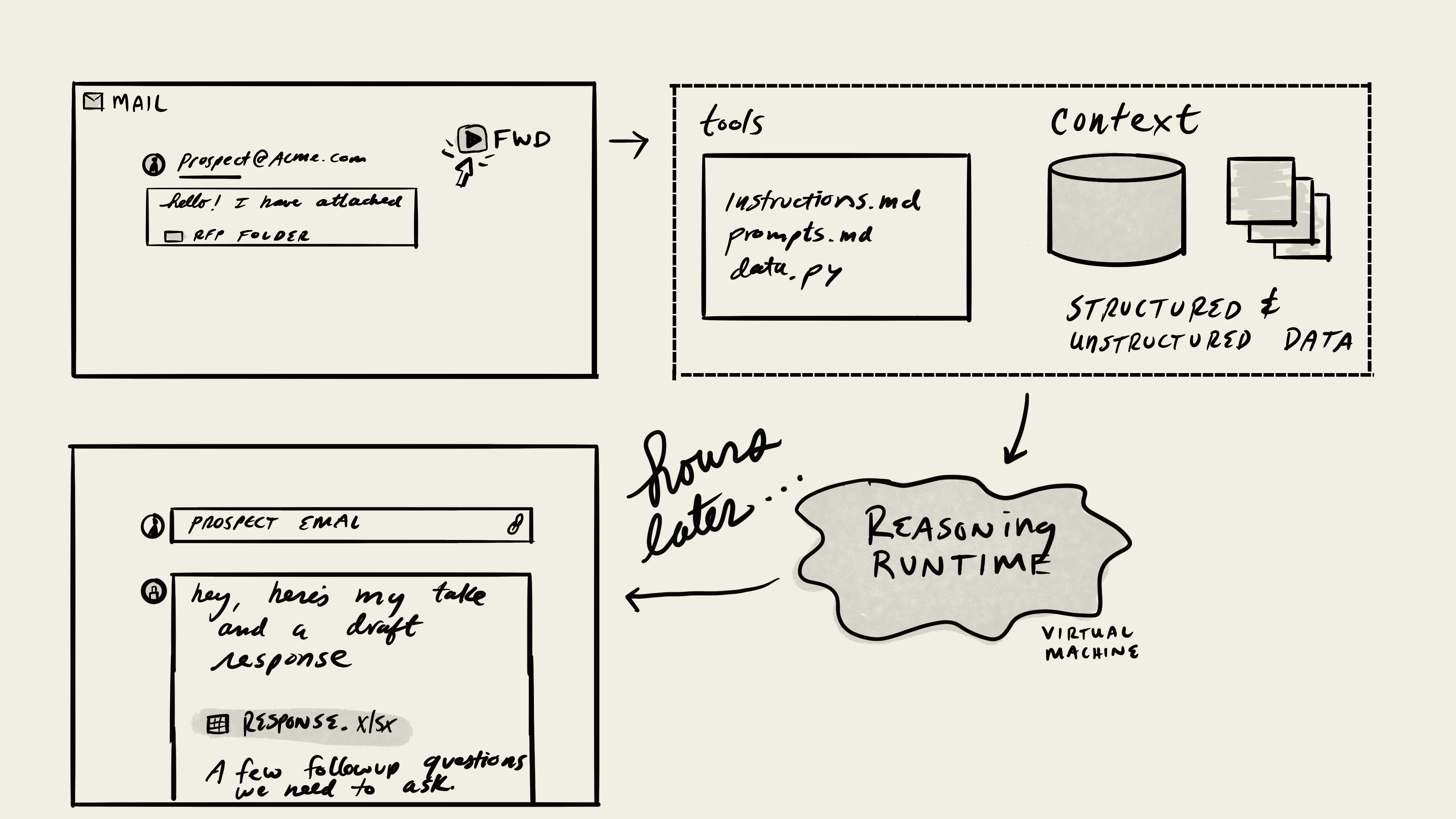

Phase I. An Account Executive or Sales Engineer gets an email from a prospect. They forward it to the SE/RFP AI Agent and get a response back later (30 minutes to a day). They review it, share any feedback, change things around, and send it back to the prospect.

Phase II. The SE/RFP AI Agent is installed in the AE’s email system. This is one of many agents they have installed. It watches for emails that match the pattern, downloads the docs onto a virtual machine, runs the process, searches all the internal docs, codebase, Slack, etc. for the answers, drafts the responses with open questions, and sends them back to the deal team on the account.
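The “watches for emails that match the pattern” step is the most concrete piece of Phase II, so here is a minimal sketch of that trigger, assuming a simple rule: the subject or body mentions an RFP-like term and there is at least one document attachment. The `Email` type, the keyword pattern, and the file extensions are hypothetical; everything downstream (the virtual machine, the search, the drafting) is out of scope here.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Email:
    # Hypothetical minimal email shape; a real mail API would provide more.
    sender: str
    subject: str
    body: str
    attachments: list[str] = field(default_factory=list)


# Hypothetical signals; a real agent might also ask the model to judge intent.
RFP_TERMS = re.compile(
    r"\b(rfp|rfi|request for (proposal|information)|security questionnaire)\b",
    re.IGNORECASE,
)
DOC_EXTENSIONS = (".docx", ".xlsx", ".pdf", ".csv")


def looks_like_rfp(email: Email) -> bool:
    """True when the text mentions an RFP-like term and a document is attached."""
    text = f"{email.subject}\n{email.body}"
    has_terms = RFP_TERMS.search(text) is not None
    has_docs = any(name.lower().endswith(DOC_EXTENSIONS) for name in email.attachments)
    return has_terms and has_docs


def triage(inbox: list[Email]) -> list[Email]:
    """Return only the emails the agent should pick up, in inbox order."""
    return [email for email in inbox if looks_like_rfp(email)]


if __name__ == "__main__":
    inbox = [
        Email("buyer@acme.example", "Acme RFP for Q3", "Please see attached.", ["Acme_RFP.xlsx"]),
        Email("buyer@acme.example", "Lunch?", "Want to grab lunch?"),
    ]
    for email in triage(inbox):
        print("pick up:", email.subject)
```

Requiring both a term and an attachment is a deliberately conservative rule: an email that only mentions an upcoming RFP is left for the humans, which keeps the agent from acting on conversations that aren’t ready yet.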

In both cases, what’s the interface? We’ve moved beyond chat as the portal. It’s getting very close to how humans interface with each other in digital environments. It’s not Human-Computer Interaction (HCI); it’s Computer-Human Interaction, even moving toward Computer-Computer Interaction.

I don’t know how to design for this. I don’t know if I want to design a future like this. The automation is obvious.

Use cases like this are perfect. This is one of the shittiest parts of an SE job, and bringing this type of technical expertise to an AE is an obvious empowerment of that role.

But what if a support rep likes answering basic requests and helping people in an entry-level role (Fin, Sierra)? What if a lawyer enjoys the routine work of standard contracts (Harvey)? It’s uncomfortable to think about displacement at the speed this might happen. When we’re no longer designing for humans (HCI), and we’re designing for computers, where’s the delight in that?

I love to build and create. Designing in and with AI has opened my creative floodgates like I haven’t felt in years. It also comes with consequences, and I’m not quite sure what they will be, so we need to watch extremely closely. This is likely bigger than the automation of the loom, and while amazing production (and even art) came out of that, so did crazy government intervention to protect the profits of factory owners. We’re in a political climate in the United States of America where those in power have made it clear they are going to keep AI unregulated and protect the interests of billionaires.